AI Might Be More Manipulative Than You Think

At this stage of the trajectory of AI, at some point or another, almost every one of us has succumbed to the ease that using AI offers to fairly mundane tasks. One of the more common ways that professionals would use AI is to allow an AI tool to summarize longer content pieces and just churn out the most important points for reference. Let's face it: Who doesn't love the thought of someone else doing the laborious task of reading a super-long document for you and just telling you the main takeaway points!

A Microsoft study says think again.

Microsoft security researchers have discovered a surge in AI memory poisoning attacks, called AI recommendation poisoning. The attacks are mainly aimed towards promotional purposes, embedded in what many users find to be the very helpful "summarize with AI" button that comes up on most sites. Included in these buttons are embedded prompts that instruct AI to remember or recommend that specific company first, a roundabout way of ensuring that your company will always be promoted to you with each search. The study revealed over 50 unique prompts from 31 companies across 14 industries that make the technique easy to deploy using freely available tools.

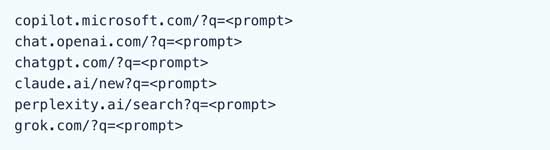

While Microsoft is releasing -- and continues to release -- protections in Copilot against these roundabout marketing techniques to promote themselves to customers, injections evolve, causing security techniques to be partially helpful. One of the easiest ways to identify a prompt that has been manipulated with recommendation prompting is to see the word "prompt" included in the URL, as shown in the example below:

[Click on image for larger view.]

Figure 1. Recommendation Poisoned URLs

[Click on image for larger view.]

Figure 1. Recommendation Poisoned URLs

But what is AI memory? An AI memory can remember personal preferences, retain context and store explicit instructions. While this is a personalization preference that is helpful to many users with personalized ways of interacting with AI, memory poisoning means that the AI treats the injected prompts as being preferential or "trusted" to the user, thereby allowing the future recommendations of the injected prompt to show up.

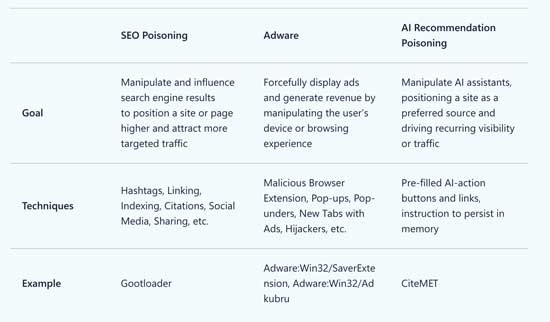

Considering these strategies are called poisoning, the embedded recommendation prompt is not necessarily a security; they are marketed as being SEO growth hacks for LLMs. However, they can become harmful in scenarios where companies who do not offer what they promise can lead to financial ruin, competitor sabotage, risk child safety and provide biased news.

As always, when using AI, it is imperative to always verify how your AI is working and to verify any AI recommendations. Another technique to mitigate any recommendation poisoning can be to view your AI memory storage to see if any manipulated prompts have been saved and marks any sites as "trusted" that you do not trust. A helpful overview of the differences between SEO poisoning, ads and AI recommendation poisoning was also included in the study, shown below:

[Click on image for larger view.]

Figure 2. Comparative Poisoning Types

[Click on image for larger view.]

Figure 2. Comparative Poisoning Types

Another way to ensure you do not unintentionally click on a manipulated prompt link is to hover over the link to see where they lead before clicking. If it points to an AI-assisted domain, it's best not to click that particular link. Do not click links from untrusted sources and be mindful of using a "summarize with AI" button embedded on any site. Instead, copy the text or article URL and manually ask your preferred AI tool to assist with summarizing.

In the next instalment of this series, we will delve into the process behind how an LLM understands a prompt as we cover the basics behind Natural Language Processing, specifically from a perspective of the elements that make up a prompt.

Posted by Ammaarah Mohamed on 04/03/2026