The Ethics of Ethical AI

- By Pure AI Editors

- 03/19/2026

There's no denying that AI systems are becoming an increasingly important part of all aspects of our lives. There is a lot of talk about ethical AI. But who decides exactly what ethical AI is and how it is implemented? Are there dangers associated with ethical AI?

What Is Ethical AI?

Ethical AI is the development and use of AI systems in ways that align with moral values. Commonly stated principles associated with ethical AI include fairness, transparency, accountability, privacy, safety, and respect for human rights. But all of these characteristics are subjective and open to various definitions and interpretations.

Who Decides What Ethical AI Is?

The primary decision-makers for ethical AI are tech company executives, government policymakers, AI researchers and engineers, and to a lesser extent, international bodies. These decision-makers have different, often conflicting motivations.

Final decisions regarding what constitutes ethical AI are made by very few people. The exact number of people who make these decisions is impossible to determine, but a recent internal survey at a leading AI technology company suggests it is almost certainly fewer than 70 in the world. So much power in the hands of so few people is a cause for concern.

Additionally, ethical AI is problematic because definitions of what is considered ethical vary by culture. What is considered ethical in the United States is certainly very different from what is considered ethical in China. And it is certain that many companies adopt AI ethics as a marketing strategy, promoting an exaggerated sense of ethical responsibility solely to improve the company's reputation -- corporate virtue signaling.

Why Is Developer Psychology a Problem for Ethical AI?

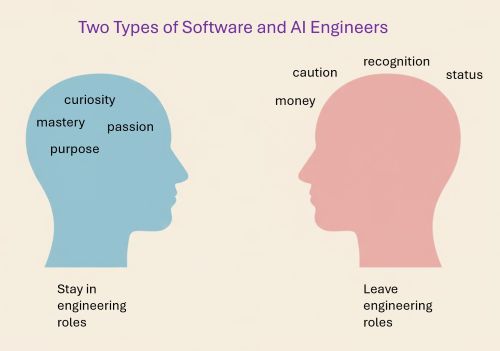

There are numerous studies that show software and AI engineers fall into two broad categories: those who are driven by internal motivations and those who are driven by external outcomes.

[Click on image for larger view.] Two Types of AI Engineers

[Click on image for larger view.] Two Types of AI Engineers

Characteristics of the first type of AI engineers are:

- They enjoy coding and technical problem-solving

- They invest deeply in technical mastery

- They prefer individual contributor roles

- They are resistant to management activities

Characteristics of the second type of AI engineers are:

- They see engineering primarily as a high-income, high-status career

- They are more tolerant of meetings and coordination

- They are not emotionally attached to coding itself

- They are more open to role changes

The first type of AI engineers tend to stay in technical roles where their skillsets grow over time. The second type of AI engineers tend to leave technical roles for positions where they sit closer to executive decision-making, believe they carry moral authority, and do work that cannot be benchmarked technically.

Put another way, the second type of engineers, who typically have increasingly outdated technical skills and are driven to some extent by ego, are the engineers who gravitate to ethical AI committees. These are often not the best technical voices to advise executive leadership about ethical AI.

What Are the Four Dangers of Ethical AI?

A significant danger of ethical AI lies in the standardization of ethics. When a narrow set of values is embedded into AI systems and deployed at scale, those values can be imposed on essentially everyone, without their consent. For example, defining fairness in purely statistical terms can unfairly penalize a majority group by granting unwarranted privileges and advantages to a minority group.
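To make "purely statistical terms" concrete, here is a minimal Python sketch that assumes one common statistical definition, demographic parity (equal selection rates across groups). The article does not name a specific definition, so that choice, the group labels, the scores, and the thresholds are all hypothetical and invented for illustration.

```python
# Hypothetical applicants as (group, score) pairs. All data are invented.
applicants = [
    ("A", 90), ("A", 80), ("A", 70), ("A", 60),
    ("B", 75), ("B", 65), ("B", 55), ("B", 45),
]


def decide(people, thresholds):
    """Accept an applicant when the score meets that group's threshold."""
    return [(group, score >= thresholds[group]) for group, score in people]


def selection_rate(decisions, group):
    """Fraction of applicants in `group` who were accepted."""
    outcomes = [accepted for g, accepted in decisions if g == group]
    return sum(outcomes) / len(outcomes)


def parity_gap(decisions):
    """Demographic parity gap: selection rate of A minus selection rate of B."""
    return selection_rate(decisions, "A") - selection_rate(decisions, "B")


# One shared threshold: the same rule for everyone, unequal selection rates.
shared = decide(applicants, {"A": 70, "B": 70})
gap_shared = parity_gap(shared)

# Group-specific thresholds (assumed values) chosen so selection rates match.
adjusted = decide(applicants, {"A": 80, "B": 65})
gap_adjusted = parity_gap(adjusted)

print(f"shared threshold gap:   {gap_shared}")
print(f"adjusted threshold gap: {gap_adjusted}")
```

With the shared threshold, the selection rates differ by 0.5. With the adjusted thresholds the gap is zero, but a group A applicant scoring 70 is rejected while a group B applicant scoring 65 is accepted. Whether that trade-off is fair is a value judgment that the statistical definition settles implicitly.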

A second danger is that ethical AI can also legitimize surveillance and control. AI systems that have been justified as ethical may be used to monitor populations, restrict freedoms, or reinforce social conformity.

A third danger is that ethical AI can become a superficial slogan for tech companies rather than a meaningful practice. Companies are tempted to engage in "ethics washing," publicly promoting ethical principles without making substantive changes to their systems -- corporate virtue signaling.

A fourth danger is related to false neutrality. By default, AI systems are often seen as objective. Labeling these systems as ethical can further reinforce this perception. This can discourage critical scrutiny and make it harder to challenge decisions made by AI algorithms that have negative consequences, especially for majority groups.

Comments

The Pure AI editors asked Dr. James McCaffrey, an AI and machine learning expert, to comment. McCaffrey noted, "I have seen the internal company report mentioned in this article that estimates how many people on the planet have final approval authority for deciding how ethical AI is implemented. If anything, I'd guess the number of these powerful people is even fewer than the 70 estimated in this article."

"So much power in the hands of so few people is troublesome. But on the other hand, some forms of benign technical dictatorship often work well, as opposed to technologies and policies that are designed by committee. Several European regulatory policies related to technology are examples of group decision-making gone completely off the rails."

McCaffrey added, "I was aware of the often-clear distinction between two main types of software and AI developers, inner-motivated and outer-motivated. During my days as a university professor, the differences usually manifested themselves in students by the middle of the junior year. And later, as a research engineer working on AI, and interacting with ethical AI committees, in my experience the members of those committees were in fact dominated by former practicing engineers who left technical roles for less technically demanding work. Put somewhat harshly, ethical AI committees are not always made up of the best and brightest employees, at least from a technical competence point of view."